Last year, a security researcher remotely unlocked, started, and tracked a Kia – any Kia made after 2013 – using nothing but a license plate number. No special hardware. No physical access. Just a flaw in a web API that nobody on the development side had thought to test.

I keep coming back to that story because it captures something important about where we are right now with connected systems. We’re building incredibly sophisticated AI – perception models, agentic workflows, AR interfaces – and we’re plugging it into vehicles, medical devices, and industrial systems. But the security thinking hasn’t kept up. Not because people don’t care, but because AI teams and security teams still largely operate in different worlds.

This article makes a simple case: if you build AI systems, especially those that interact with the physical world, you need to start thinking like a hacker. Not to break things, but to build them better. And the automotive industry offers some of the most compelling and alarming lessons in why this mindset matters.

Cars Are Now Software Products with Software Problems

Not long ago, vehicle security meant a steering wheel lock. Today, a modern car processes data from dozens of sensors, connects to cloud services, communicates with infrastructure and other vehicles, and receives software updates while parked in your driveway.

McKinsey estimates that connectivity services, shared mobility, and software-driven upgrades could expand automotive revenue by up to $1.5 trillion by 2030. That’s a massive incentive to connect everything to everything. But every new connection is also a door, and not all of them are locked.

The costs are already real. In 2024, the automotive industry faced an estimated $22.5 billion in cyberattack-related damage, according to VicOne’s annual report: $20 billion in data leakage, $1.9 billion in system downtime, and over $500 million in ransomware alone. Upstream Security counted more than 100 ransomware attacks and over 200 data breaches targeting the automotive and smart mobility ecosystem that same year.

Ransomware is a type of malicious software (malware) that blocks access to files or entire systems by encrypting data or locking devices. The attackers then demand a ransom in exchange for restoring access. Victims typically see an extortion message with payment instructions, often requesting payment in cryptocurrency.

When a License Plate Is All You Need

The Kia vulnerability is worth looking at more closely, because the technical details reveal just how basic the failure was.

Security researcher Sam Curry and his team found that Kia’s connected vehicle platform allowed them to remotely control key vehicle functions (lock, unlock, start, stop, and track) through flaws that any penetration tester would recognize as textbook web application bugs. The attack worked regardless of whether the owner had an active connected services subscription. Worse, it let the attacker silently add themselves as an invisible second user, with no notification sent to the real owner.

A few months later, Curry found a similar pattern in Subaru’s STARLINK service. The administrator console had a password reset endpoint that required no confirmation token, and a two-factor authentication prompt that could be bypassed by simply removing a client-side overlay. Through that, the researchers gained unrestricted access to vehicles and customer accounts across the United States, Canada, and Japan. They could pull a full year of location history, accurate to within five meters, updated every time the engine started.
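Both failures come down to the backend trusting the caller without checking anything on the server side. The sketch below shows the two missing checks in miniature: a password reset that requires a one-time token, and a vehicle command that verifies ownership on every call. It is an illustration only. The in-memory tables, function names, and command list are hypothetical and are not taken from Kia's or Subaru's systems.

```python
import hmac
import secrets

# Hypothetical data: which account is authorized for which vehicle (VIN -> account id).
VEHICLE_OWNERS = {"VIN123": "alice"}
# Hypothetical store of pending one-time reset tokens (account id -> token).
RESET_TOKENS = {}


def issue_reset_token(account_id: str) -> str:
    """Create a one-time token that would be delivered out of band, e.g. by email."""
    token = secrets.token_urlsafe(32)
    RESET_TOKENS[account_id] = token
    return token


def reset_password(account_id: str, token: str) -> bool:
    """Allow a reset only if the caller presents the token issued for this account."""
    expected = RESET_TOKENS.pop(account_id, None)  # single use: consumed on any attempt
    if expected is None:
        return False
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode(), token.encode())


def send_vehicle_command(caller_id: str, vin: str, command: str) -> str:
    """Check authorization on the server for every command, whatever the client UI shows."""
    if command not in {"lock", "unlock", "start", "stop", "locate"}:
        raise ValueError(f"unknown command: {command}")
    if VEHICLE_OWNERS.get(vin) != caller_id:
        raise PermissionError("caller is not an authorized user of this vehicle")
    return f"{command} accepted for {vin}"
```

With these checks in place, a request such as `send_vehicle_command("mallory", "VIN123", "unlock")` raises `PermissionError` instead of reaching the car, and a reset attempt without a valid token returns `False`.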

Both manufacturers patched the issues quickly after responsible disclosure, and there’s no evidence anyone exploited them in the wild. But here’s what sticks with me:

The most dangerous vulnerabilities weren’t in the vehicle at all. They were in the APIs, web portals, and backend systems that connect to it.

The car itself was fine. The software around it was wide open.

The pattern of a sophisticated product but unsecured plumbing shows up everywhere in AI projects too. And it’s exactly the kind of gap you only see if you’re deliberately looking for it.

I’ve seen this in practice. An LLM integration with genuinely solid prompt architecture, careful output filtering, polished user-facing design – and an unauthenticated internal API endpoint that could override the system prompt entirely. Nobody had tested it because it wasn’t in scope for the usual QA process. It wasn’t even on anyone’s radar as a security concern. It was just how the backend was wired during a fast prototype phase that never got revisited.

Image source: Photo by Mo Eid from Pexels https://www.pexels.com/photo/orange-sports-coupe-2631489/

The AI Layer: A New Class of Attack Surface

As vehicles integrate more artificial intelligence for perception, navigation, driver assistance, and increasingly for AR-enhanced interfaces, a fundamentally different category of risk emerges: adversarial AI attacks.

What Are Adversarial AI Attacks?

Unlike traditional software bugs, adversarial attacks exploit the way machine learning models interpret data. Small, carefully crafted perturbations to input data can cause AI systems to misclassify objects, miss obstacles, or make dangerous decisions – all while appearing to function normally.
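To make "small, carefully crafted perturbations" concrete, here is a toy sketch of one well-known technique, the fast gradient sign method (FGSM). The article does not name a specific method, so treat this as one illustrative choice. The three-feature logistic-regression "model", its weights, and the input are all made up for the example and stand in for a real perception network.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(w: list, b: float, x: list) -> float:
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def fgsm(w: list, b: float, x: list, y: int, eps: float) -> list:
    """Shift every feature by at most eps in the direction that increases the loss.

    For logistic regression with cross-entropy loss, the gradient of the loss
    with respect to the input is (p - y) * w.
    """
    p = predict(w, b, x)
    grad = [(p - y) * wi for wi in w]
    sign = lambda g: (g > 0) - (g < 0)
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


# Made-up weights and input, chosen only to make the effect visible.
w, b = [2.0, -3.0, 1.0], 0.0
x, y = [0.5, 0.1, 0.2], 1

x_adv = fgsm(w, b, x, y, eps=0.2)
print(f"clean input:     p(class 1) = {predict(w, b, x):.3f}")
print(f"perturbed input: p(class 1) = {predict(w, b, x_adv):.3f}")
```

No feature moves by more than 0.2, yet the predicted class flips from 1 to 0. Attacks on real perception models follow the same idea in a much higher-dimensional input space, where the per-pixel change can be far smaller.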

How do adversarial attacks target vehicles?

| Attack Type | What It Does | Example |
| --- | --- | --- |
| Adversarial patches | Physical stickers or markings that cause object detection models to misclassify or ignore objects | Researchers have demonstrated patches that make stop signs invisible to YOLO-based detection systems (YOLO is a real-time object detection algorithm used in computer vision) |
| Sensor spoofing | Injecting false data into LiDAR, radar, or camera feeds | Simulated LiDAR (Light Detection and Ranging) attacks can create phantom obstacles or hide real ones from autonomous driving systems |
| Data poisoning | Corrupting training datasets to embed backdoor behaviours in models | A poisoned dataset could cause a model to misidentify specific objects under specific conditions |
| Model inversion | Extracting private training data from a deployed model | Attackers could reconstruct sensitive driving patterns or user data from an exposed model endpoint |

A 2025 systematic review in Artificial Intelligence Review (Springer), covering over 170 papers on adversarial attacks against autonomous vehicles, found that even advanced deep learning systems remain significantly vulnerable, particularly when attacked in real-time scenarios. The researchers noted a critical need for more realistic security evaluations and for development teams to adopt defense strategies early in the design process, not as an afterthought.

Another 2025 research effort deployed a Perception Streaming Attack (PSA) framework on an NVIDIA Jetson edge device (the same type of hardware used in actual vehicles) and demonstrated that it could effectively erase over 76% of objects that should have been detected by the perception module, running at 12 frames per second. That’s fast enough to operate in real driving conditions.

Why Do Adversarial AI Attacks Matter for AI Teams and Not Just Car Makers?

These vulnerabilities aren’t exclusive to vehicles. They reflect a broader pattern in how AI systems are built across industries. Any team deploying machine learning models that process real-world data, whether for autonomous driving, e-commerce personalization, or conversational AI, should be sitting with these questions:

- How robust is our model against adversarial inputs?

- Could someone manipulate our training data to introduce hidden biases or backdoors?

- What happens when our model encounters inputs it was never designed to handle?

- Are our APIs and data pipelines secured as carefully as our models?

If you’re building AI systems and you haven’t asked these questions, you’re not thinking like a hacker yet. And honestly, you probably should be – because someone else already is.

Regulation Is Catching Up – Fast

The automotive industry’s cybersecurity wake-up call has also triggered a global regulatory response. The most significant development is UN Regulation No. 155 (R155), adopted by the UNECE World Forum for Harmonization of Vehicle Regulations (WP.29).

R155 requires vehicle manufacturers to implement a certified Cybersecurity Management System (CSMS) that covers the entire vehicle lifecycle, from development through production and post-production. Since July 2024, compliance has been mandatory for all new vehicles sold in the European Union.

The regulation defines 69 specific cyber threats and vulnerabilities across seven categories and requires 23 mitigation measures. It demands that manufacturers demonstrate they manage cybersecurity risks across their entire supply chain, detect and respond to incidents in real time, and provide secure software update mechanisms.

Global adoption is accelerating

The EU made R155 mandatory for all new vehicles in July 2024. Japan transposed the regulation in 2021 and has been fully compliant since. South Korea is developing independent but R155-aligned standards. China published GB 44495:2024, mandatory for new vehicle types from January 2026. The UK is in active consultation on national adoption, while the United States currently operates on voluntary NHTSA guidelines, though the Commerce Department has proposed a ban on Chinese and Russian vehicle software.

The companion standard ISO/SAE 21434 provides the engineering framework for cybersecurity risk management that underpins R155 compliance. Together, they represent the most comprehensive regulatory push for cybersecurity-by-design that any industry has seen.

What Can AI Teams Learn from Automotive Regulation?

The principles behind R155 aren’t automotive-specific. They represent a mature framework for thinking about security in any system where software meets the physical world.

- Threat Analysis and Risk Assessment (TARA) is a mandatory first step: not optional, not something you do after the MVP ships.

- Security must span the full lifecycle, not just the launch.

- Supply chain accountability matters: you’re responsible for every component, including third-party libraries and models.

- Continuous monitoring is expected: deploying a system isn’t the end; it’s the beginning of your security responsibility.

- And incident response planning is built in: the assumption is that breaches will happen, and preparation is required.

As AI regulation evolves globally, from the EU AI Act to sector-specific frameworks, engineering teams that already think in these terms will be better prepared than those scrambling to retrofit security into systems designed without it.

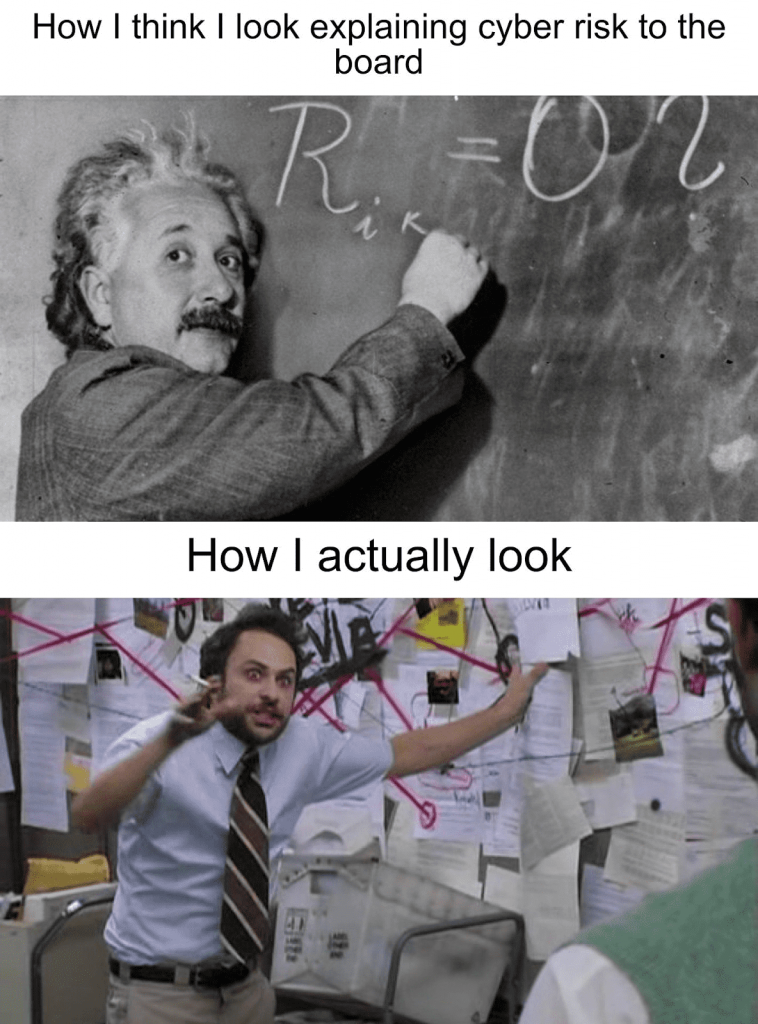

The Hacker Mindset: What It Actually Looks Like

Let me be clear: thinking like a hacker doesn’t mean learning to break into things. It means training yourself to see the gaps – the space between what a system is supposed to do and what you could force it to do if you tried. Security folks call this understanding your attack surface, and it’s a habit every AI engineer ought to pick up.

Old mindset vs. hacker mindset

| Traditional AI Thinking | Hacker Thinking |
| --- | --- |
| “Our model hits 98% accuracy on the test set” | “What inputs would make the other 2% blow up spectacularly?” |
| “We check data quality during training” | “Could someone poison the data upstream and we’d never notice?” |
| “Our API requires authentication” | “What if someone gets a valid token through a path we didn’t think of?” |
| “We use encrypted connections” | “What’s exposed if someone hits the backend directly?” |
| “Our OTA updates work great” | “Could a man-in-the-middle push a malicious update instead?” |
| “The model runs locally on-device” | “Can someone rip out the weights and reverse-engineer the whole thing?” |

Building a security-first culture (which is harder than it sounds)

The most effective cybersecurity programs don’t rely on hiring dedicated security teams alone. They embed security awareness into every engineering role. For AI and data teams, this means a few concrete things.

Include threat modeling in your design process.

Before writing the first line of code, map out who might want to attack your system, what they could gain, and where the weakest points are. The STRIDE framework (Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service, Elevation of Privilege) is a good starting point. It’s not perfect (no framework is) but it forces a conversation that otherwise doesn’t happen.
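One lightweight way to keep that conversation honest is to record a STRIDE pass per component and make the unreviewed categories visible. The sketch below is a hypothetical note-taking structure, not an official STRIDE tool. The component name and the threat notes are invented for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stride(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information Disclosure"
    DENIAL_OF_SERVICE = "Denial of Service"
    ELEVATION_OF_PRIVILEGE = "Elevation of Privilege"


@dataclass
class Component:
    """One box in the architecture diagram plus the threats discussed for it."""
    name: str
    threats: dict = field(default_factory=dict)  # Stride category -> short note

    def record(self, category: Stride, note: str) -> None:
        self.threats[category] = note

    def unreviewed(self) -> list:
        """Categories nobody has discussed yet for this component."""
        return [c for c in Stride if c not in self.threats]


# Hypothetical example: an internal endpoint that configures an LLM's system prompt.
endpoint = Component("internal prompt-config API")
endpoint.record(Stride.SPOOFING, "No authentication: any internal caller is trusted")
endpoint.record(Stride.TAMPERING, "System prompt can be overwritten without review")

for category in endpoint.unreviewed():
    print(f"{endpoint.name}: {category.value} not yet reviewed")
```

The value is less in the code than in the list it prints: four categories still open means the design review is not finished.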

Test adversarially, not just functionally.

Standard unit tests verify that your system does what it should. Adversarial testing verifies that it doesn’t do what it shouldn’t. Introduce fuzzing, boundary testing, and adversarial example generation into your CI/CD pipelines. This is easier said than done, especially on timelines that are already tight, but even a few hours of structured adversarial review will surface things that functional testing misses entirely.
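As a rough sketch of what that can look like in a test suite, the harness below throws boundary values and random strings at a parser and checks one property: bad input is either rejected cleanly or never produces an out-of-range result. The function `parse_speed_kmh`, its 0 to 300 range, and the harness itself are hypothetical stand-ins. A real pipeline would more likely use an established fuzzing or property-testing tool.

```python
import random
import string


def parse_speed_kmh(raw: str) -> int:
    """Hypothetical function under test: parse a speed reading, reject anything odd."""
    value = int(raw.strip())  # int() raises ValueError on non-numeric text
    if not 0 <= value <= 300:
        raise ValueError(f"speed out of range: {value}")
    return value


def fuzz(target, runs: int = 2000, seed: int = 0) -> list:
    """Return the inputs for which target crashed or returned an out-of-range value."""
    rng = random.Random(seed)  # fixed seed keeps CI runs reproducible
    alphabet = string.digits + string.ascii_letters + " -+._\n\x00"
    boundary_cases = ["", "-1", "0", "300", "301", " 42 ", "4_2", "9" * 400]
    random_cases = [
        "".join(rng.choice(alphabet) for _ in range(rng.randint(0, 12)))
        for _ in range(runs)
    ]
    failures = []
    for raw in boundary_cases + random_cases:
        try:
            result = target(raw)
        except ValueError:
            continue  # a clean rejection is the expected outcome for bad input
        except Exception as exc:  # any other exception is a finding
            failures.append((raw, repr(exc)))
            continue
        if not (isinstance(result, int) and 0 <= result <= 300):
            failures.append((raw, result))
    return failures


print("validated parser findings:", len(fuzz(parse_speed_kmh)))
print("bare int() findings:", len(fuzz(int)))
```

Running the same harness against bare `int` shows why the range check matters: inputs such as `"-1"` and `"301"` parse successfully and come back as findings.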

Secure the full pipeline, not just the model.

Data collection, annotation, storage, training infrastructure, deployment of APIs, monitoring dashboards: every link in the chain is a potential target. A compromised annotation pipeline can introduce subtle biases that no amount of model testing will catch. This is an area where I think the field is genuinely underprepared; the tooling for ML pipeline security is still maturing, and best practices are nowhere near as established as they are for application security.
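One small, concrete control for the data side is a hash manifest: fingerprint every dataset file once it has been reviewed, then verify those fingerprints before each training run. The sketch below assumes files are held in memory as a name-to-bytes mapping to stay self-contained, and the file names are invented. Note its limit: it detects changes made after the manifest was created, not data that was poisoned before review, and the manifest itself has to be stored somewhere the annotation pipeline cannot write to.

```python
import hashlib


def build_manifest(files: dict) -> dict:
    """Map each file name to the SHA-256 digest of its contents."""
    return {name: hashlib.sha256(data).hexdigest() for name, data in files.items()}


def verify(files: dict, manifest: dict) -> list:
    """List every difference between the current files and the trusted manifest."""
    problems = []
    for name, expected in manifest.items():
        if name not in files:
            problems.append(f"missing: {name}")
        elif hashlib.sha256(files[name]).hexdigest() != expected:
            problems.append(f"modified: {name}")
    for name in files:
        if name not in manifest:
            problems.append(f"unexpected: {name}")
    return sorted(problems)


# Hypothetical reviewed dataset.
reviewed = {"labels.csv": b"img1,stop_sign\nimg2,yield\n", "img1.bin": b"\x00\x01"}
manifest = build_manifest(reviewed)

# Later, one label has been silently flipped and an extra file has appeared.
current = dict(reviewed)
current["labels.csv"] = b"img1,speed_limit\nimg2,yield\n"
current["extra.bin"] = b"\xff"

print(verify(current, manifest))
```

The check is cheap enough to run at the start of every training job, which turns "someone changed the labels" from a silent event into a failed build.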

Assume your perimeter will be breached.

Design systems with defense-in-depth: multiple layers of security so that a single failure doesn’t cascade into a complete compromise. This is exactly what automotive manufacturers learned the hard way: a vulnerable web portal shouldn’t give access to vehicle controls. The same principle applies to AI systems where a compromised API endpoint shouldn’t expose your model’s training data or system prompts.
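In code, defense-in-depth often means several independent checks that each have the power to say no. The following sketch assumes a hypothetical request object with token scopes and a resource owner. The action names and layer list are invented for illustration, and a real system would add layers outside application code (network segmentation, rate limiting, audit logging).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    caller_id: str
    scopes: frozenset     # permissions granted to the caller's token
    action: str           # e.g. "vehicle:unlock" or "profile:read"
    resource_owner: str   # account that owns the targeted resource


# Hypothetical allowlist of actions this service exposes at all.
ALLOWED_ACTIONS = frozenset({"profile:read", "vehicle:unlock"})


def action_is_known(req: Request) -> bool:
    return req.action in ALLOWED_ACTIONS


def token_has_scope(req: Request) -> bool:
    return req.action in req.scopes


def caller_owns_resource(req: Request) -> bool:
    return req.caller_id == req.resource_owner


# Each layer is evaluated independently, so bypassing one does not open the door.
LAYERS = (
    ("known action", action_is_known),
    ("token scope", token_has_scope),
    ("ownership", caller_owns_resource),
)


def rejected_by(req: Request) -> list:
    """Names of the layers that refuse this request; an empty list means allowed."""
    return [name for name, check in LAYERS if not check(req)]


# A web-portal session that was only ever granted read access to a profile.
portal_session = Request("mallory", frozenset({"profile:read"}), "vehicle:unlock", "alice")
print(rejected_by(portal_session))
```

The portal session is refused twice over, by scope and by ownership. If a bug ever disabled one of those checks, the other would still hold.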

Stay current on emerging threats.

The threat landscape evolves continuously. Subscribe to advisories, follow security researchers, participate in communities. What seems theoretical today can become a practical exploit tomorrow; the Kia vulnerability, which sounds almost too simple to be real, was live in production systems as recently as last year.

Image source: https://www.asylas.com/wp-content/uploads/2022/12/einstein-security-meme.png

The Road Ahead: How Does the Automotive Industry’s Cybersecurity Evolve?

The automotive industry’s cybersecurity journey offers a preview of what every AI-intensive sector will face as systems become more connected, more autonomous, and more integrated into daily life. It’s not a perfect analogy; AI systems fail differently than vehicles, and the regulatory landscape is different. But the structural challenge is the same: you’re deploying complex software into environments you don’t fully control, against adversaries who are actively looking for gaps.

Consumer expectations are already shifting. According to RunSafe Security’s 2025 Connected Car Cyber Safety Index, only 19% of connected car owners feel their vehicle is adequately protected, and 79% say protecting their physical safety from cyberattacks matters more than protecting their personal data. The trust issue is real, and it’s ahead of the industry’s ability to address it.

AI will get there too. At some point – probably sooner than most people expect – AI security incidents will become prominent enough that users and regulators start asking hard questions. The teams that will be in the best position then are the ones treating security as a design constraint today, not a compliance checkbox later.

For data and AI teams, the takeaway isn’t about cars specifically. It’s about a universal principle: the more intelligent and connected a system becomes, the more carefully its security must be designed. Not bolted on after launch. Not handed off to a separate team. Designed in, from the start, by engineers who understand that building great AI also means thinking about how it can be broken.

Because the question isn’t whether your system has vulnerabilities. It’s whether you’ve looked hard enough to find them before someone else does.


Sources

1. Upstream Security, “2025 Global Automotive & Smart Mobility Cybersecurity Report”, 2025

2. Sam Curry, “Hacking Kia: Remotely Controlling Cars With Just a License Plate”, samcurry.net, September 2024

3. Sam Curry, “Hacking Subaru: Tracking and Controlling Cars via the STARLINK Admin Panel”, samcurry.net, January 2025

4. RunSafe Security, “2025 Connected Car Cyber Safety & Security Index”, 2025

5. VicOne, Automotive Cybersecurity Annual Report, 2024

6. A.D. Mohammed Ibrahum et al., “Deep learning adversarial attacks and defenses in autonomous vehicles: a systematic literature review from a safety perspective”, Artificial Intelligence Review, Springer, 2025

7. ScienceDirect, “Cross-task and time-aware adversarial attack framework for perception of autonomous driving”, Pattern Recognition, 2025

8. UNECE WP.29, UN Regulation No. 155 — Cyber Security Management System (CSMS)

9. ISO/SAE 21434:2021, “Road vehicles — Cybersecurity engineering”

10. McKinsey & Co., Connected Vehicle Revenue Projections, 2024

11. Joe Saunders (RunSafe Security), “Cybersecurity Is the New Safety Standard for Connected Cars”, Design and Development Today, September 2025