In March 2026, the BBC reported on what it called “the race to establish an AI-free logo”. At least eight different organizations worldwide, spanning the UK, Australia, and the United States, are now developing competing certification schemes for products created without artificial intelligence. Labels like “Proudly Human”, “No AI”, and “Human Written” are appearing on films, books, websites, and marketing materials.

This is a legitimate and interesting market signal. But if you are a product manager or a technology leader, the consumer angle is only the surface of the story. The more consequential question is what it actually takes, at the product level, to claim and verify a “human-made” label, and what that commitment does to your development processes, your tooling decisions, and your accountability structures.

Why the Label Question Is Harder Than It Looks

The intuitive definition of a “human-made” product is simple: humans did the work, not machines. But once you move beyond handcrafted physical goods into digital products, software, data pipelines, or AI systems, the definition immediately starts to fracture.

AI research scientist Sasha Luccioni has put it plainly: AI is now so embedded in everyday tools and platforms that establishing a clean line between “AI-assisted” and “AI-free” is technically complicated. Spell checkers, autocomplete systems, grammar assistants, compiler optimization tools, cloud infrastructure with automated scaling: all of these involve machine intelligence at some level. The binary framing does not hold.

The more useful question is not AI vs. no AI, but rather: at which stages of the product lifecycle, and for which categories of decision, does the label make a meaningful claim?

| The Spectrum Problem: Certification bodies are already moving toward a tiered model rather than a binary one. The most credible emerging frameworks distinguish between human-written work (no generative AI involved in core creation); human-led, AI-assisted work (humans owned the process, AI contributed to execution); and AI-generated, human-reviewed work (AI produced the primary artifact, humans validated it). The challenge is that “generative AI” is not itself a fixed category: it depends on the tool, the task, and the level of human override. |

The Embedded Tool Problem: Where Is the Line for Software Products?

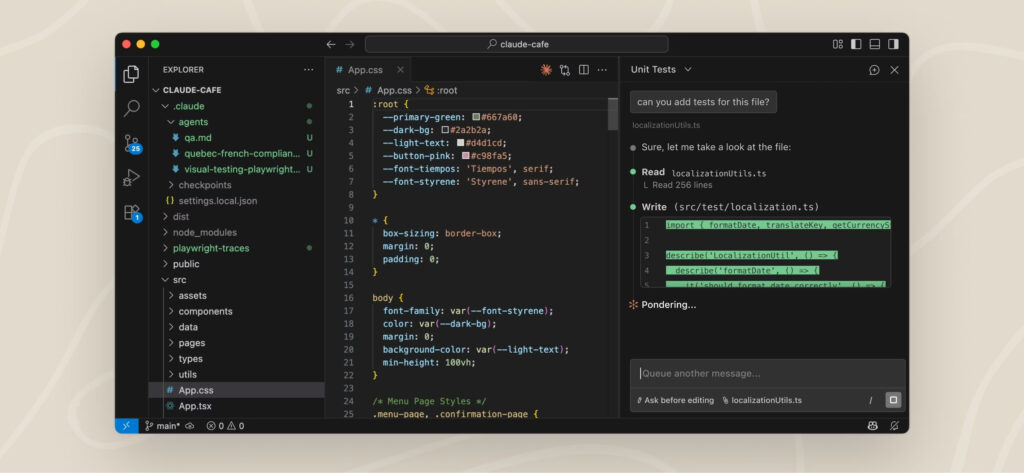

Consider a realistic scenario for a software or data product team in 2026. Your developers use GitHub Copilot or Claude Code for code completion, refactoring, and test generation. Your data engineers use Databricks Genie for natural-language querying and notebook assistance. Your documentation is generated with AI writing tools and then edited by humans. Your QA pipeline uses AI-based anomaly detection.

Does any of this disqualify the product from a “human-made” label?

The answer depends entirely on how you define the label, and current definitions are far from settled. By the end of 2025, roughly 85% of professional developers reported regular use of AI coding tools, with more than half using them daily. GitHub Copilot alone had over 20 million all-time users. Tools like Claude Code and Genie are not edge-case assistants; they are embedded into the standard professional workflow. If using these tools counts as “AI involvement”, then virtually no software product built today would qualify as human-made under a strict interpretation.

Image Source: via Claude

This is why several emerging certification initiatives have narrowed their scope specifically to generative AI: tools that produce text, code, images, audio, or video from prompts, at the level of primary content creation. Under this framing, using Copilot for inline code suggestions sits in a grayer zone than using an AI system to autonomously generate the architecture, write the codebase, and produce the documentation with minimal human direction.

| More likely to qualify as human-made | More likely to require explicit disclosure |
|---|---|
| Developers using AI for autocomplete and refactoring suggestions within human-directed code | AI autonomously generating architectural decisions, full modules, or complete codebases with light human review |
| AI-assisted grammar or spell checking on human-authored documentation | AI generating primary documentation, specifications, or user-facing content from prompts |
| Automated testing and CI/CD pipelines flagging issues for human resolution | AI making autonomous deployment, rollback, or configuration decisions without human approval |
| AI-powered infrastructure scaling with human-set parameters | AI systems trained on proprietary data generating outputs that feed directly into the product without human validation |

What a “Human-Made” Commitment Actually Changes in Your Product Lifecycle

Here is the part that matters for product managers: if your organization decides to claim a human-made label, whether for regulatory, commercial, or ethical reasons, it is not a marketing decision. It is a process constraint that propagates upstream through your entire development lifecycle.

1. Requirements and Scoping Phase

The first impact is at the point where AI tools assist with requirements gathering, customer insight synthesis, or product specification. If your PM team uses AI to summarize user research, cluster feedback themes, or generate feature specifications, this needs to be declared and classified. The question is not just whether a human reviewed the output; it is whether the human added material judgment or simply approved what the system produced.
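One rough way to make “material judgment” observable is to compare the AI-produced draft with the version a human finally signed off on. Below is a minimal sketch. It assumes both versions are kept as plain text. The function names and the 0.2 threshold are hypothetical choices, not an established metric. A small edit share does not prove a review was cursory, because a reviewer may have checked the draft carefully and agreed with it. It only flags the case for a closer look.

```python
from difflib import SequenceMatcher

# Hypothetical threshold: below this edit share, the human contribution is
# flagged as possibly "approval only" and queued for a closer look.
MATERIAL_EDIT_THRESHOLD = 0.2


def edit_share(ai_draft: str, final_text: str) -> float:
    """Return a rough 0.0-1.0 measure of how much the final text differs from the AI draft.

    SequenceMatcher.ratio() returns a similarity in [0, 1]; one minus it is a
    crude proxy for how much the human changed.
    """
    return 1.0 - SequenceMatcher(None, ai_draft, final_text).ratio()


def classify_review(ai_draft: str, final_text: str) -> str:
    """Label a review by edit share; a flag for follow-up, not proof either way."""
    if edit_share(ai_draft, final_text) < MATERIAL_EDIT_THRESHOLD:
        return "approved-as-is"
    return "materially-edited"


if __name__ == "__main__":
    draft = "Users want faster exports and better search."
    final = "Enterprise users want scheduled CSV exports; search is a secondary theme."
    print(classify_review(draft, draft))
    print(classify_review(draft, final))
```

The same comparison could be applied to AI-generated code under review, although character-level similarity says nothing about whether the reviewer understood the change.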

2. Design and Architecture

AI-assisted design tools, from UI generation to architecture diagram synthesis, are increasingly used in early product phases. A credible human-made label would require documenting what was generated vs. what was designed, and ensuring that core structural decisions originated with human expertise. This has direct implications for IP ownership and accountability: if an architectural choice was AI-suggested and then approved by a human without deep review, and it later causes a security or performance failure, it is no longer clear who is accountable for that choice.

3. Development and Code Generation

This is where the embedded tool problem is most acute. A product team using Claude Code or Genie at scale would need to establish:

- what percentage of the codebase was AI-generated vs. human-authored

- which components were written under human direction vs. produced autonomously

- whether human review of AI-generated code was substantive or cursory

None of these is currently tracked by standard development workflows, and claiming a label would require tooling and governance that most teams do not have in place.
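A team could start approximating the first two measures with a commit-message convention. Below is a minimal sketch. It assumes a hypothetical `AI-Contribution:` trailer with values `none`, `assisted`, or `generated`, added by developers to each commit. Git does not define this key. Commit counts are only a rough proxy for share of the codebase.

```python
import re
from collections import Counter

# Hypothetical team convention: a trailer line such as "AI-Contribution: assisted".
TRAILER = re.compile(
    r"^AI-Contribution:\s*(none|assisted|generated)\s*$",
    re.IGNORECASE | re.MULTILINE,
)


def contribution_of(message: str) -> str:
    """Return the declared contribution level, or 'undeclared' if no trailer is present."""
    match = TRAILER.search(message)
    return match.group(1).lower() if match else "undeclared"


def contribution_shares(messages: list[str]) -> dict[str, float]:
    """Share of commits per declared level, as fractions that sum to 1.0."""
    counts = Counter(contribution_of(m) for m in messages)
    total = sum(counts.values())
    return {level: n / total for level, n in counts.items()} if total else {}


if __name__ == "__main__":
    log = [
        "Add export endpoint\n\nAI-Contribution: assisted",
        "Generate CRUD handlers\n\nAI-Contribution: generated",
        "Fix off-by-one in pagination\n\nAI-Contribution: none",
        "Update README",  # no trailer, so it counts as undeclared
    ]
    print(contribution_shares(log))
```

Commit messages could be collected with `git log --format=%B%x00` and split on the NUL character. Keeping an explicit `undeclared` bucket matters, because a label claim is only as strong as the commits that actually carry a declaration.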

4. Testing and Validation

AI-generated test suites and automated QA pipelines introduce a recursive question: if the tests were generated by the same tools that generated the code, what does human validation actually mean? A credible label would require that validation decisions, including the definition of what constitutes acceptable performance, remain under documented human ownership.

5. Documentation and Communication

Product documentation, API references, and user-facing content are among the highest-risk areas for undisclosed AI generation. Several AI-free certification schemes have started here specifically. One reason is that AI-generated text is the easiest category to detect with current tooling. Another is that documentation directly affects user trust. A human-made label applied to a product whose documentation was AI-generated without disclosure creates a meaningful credibility gap.

| The Accountability Shift: The most operationally significant consequence of a human-made label is not what it restricts but what it requires you to document. To claim the label credibly, you need an audit trail of human decision-making at each stage: who made which call, with what information, and with what level of AI assistance. This is precisely the kind of process discipline that most agile teams have not built, because AI tools were adopted incrementally and informally. The label forces the question: can you prove it? |
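An audit-trail entry can capture exactly the three facts named above: who made the call, with what information, and with what level of AI assistance. Below is a minimal sketch. The record fields, the `evidence_gaps` check, and the example names are hypothetical. The assistance tiers are taken from the emerging frameworks described in this article.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class AIAssistance(Enum):
    # Tiers taken from the emerging frameworks described in this article.
    HUMAN_WRITTEN = "human-written"
    HUMAN_LED_AI_ASSISTED = "human-led, AI-assisted"
    AI_GENERATED_HUMAN_REVIEWED = "AI-generated, human-reviewed"


@dataclass(frozen=True)
class DecisionRecord:
    """One entry in a hypothetical decision audit trail."""
    stage: str               # lifecycle stage, e.g. "design" or "development"
    decision: str            # what was decided
    decided_by: str          # the accountable human; empty if nobody signed off
    inputs: tuple[str, ...]  # information the decision relied on
    assistance: AIAssistance
    recorded_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def evidence_gaps(records: list[DecisionRecord]) -> list[str]:
    """Return human-readable problems that would weaken a provenance claim."""
    gaps = []
    for r in records:
        if not r.decided_by.strip():
            gaps.append(f"{r.stage}: '{r.decision}' has no accountable human")
        if not r.inputs:
            gaps.append(f"{r.stage}: '{r.decision}' records no supporting inputs")
    return gaps


if __name__ == "__main__":
    records = [
        DecisionRecord("design", "Use event sourcing for orders", "a.rivera",
                       ("load test report",), AIAssistance.HUMAN_LED_AI_ASSISTED),
        DecisionRecord("documentation", "Publish API reference v2", "",
                       (), AIAssistance.AI_GENERATED_HUMAN_REVIEWED),
    ]
    for gap in evidence_gaps(records):
        print(gap)
```

The check reports gaps rather than rejecting records. The purpose of the trail is to show where a claim cannot yet be substantiated.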

The Certification Landscape: What Exists and What Is Missing?

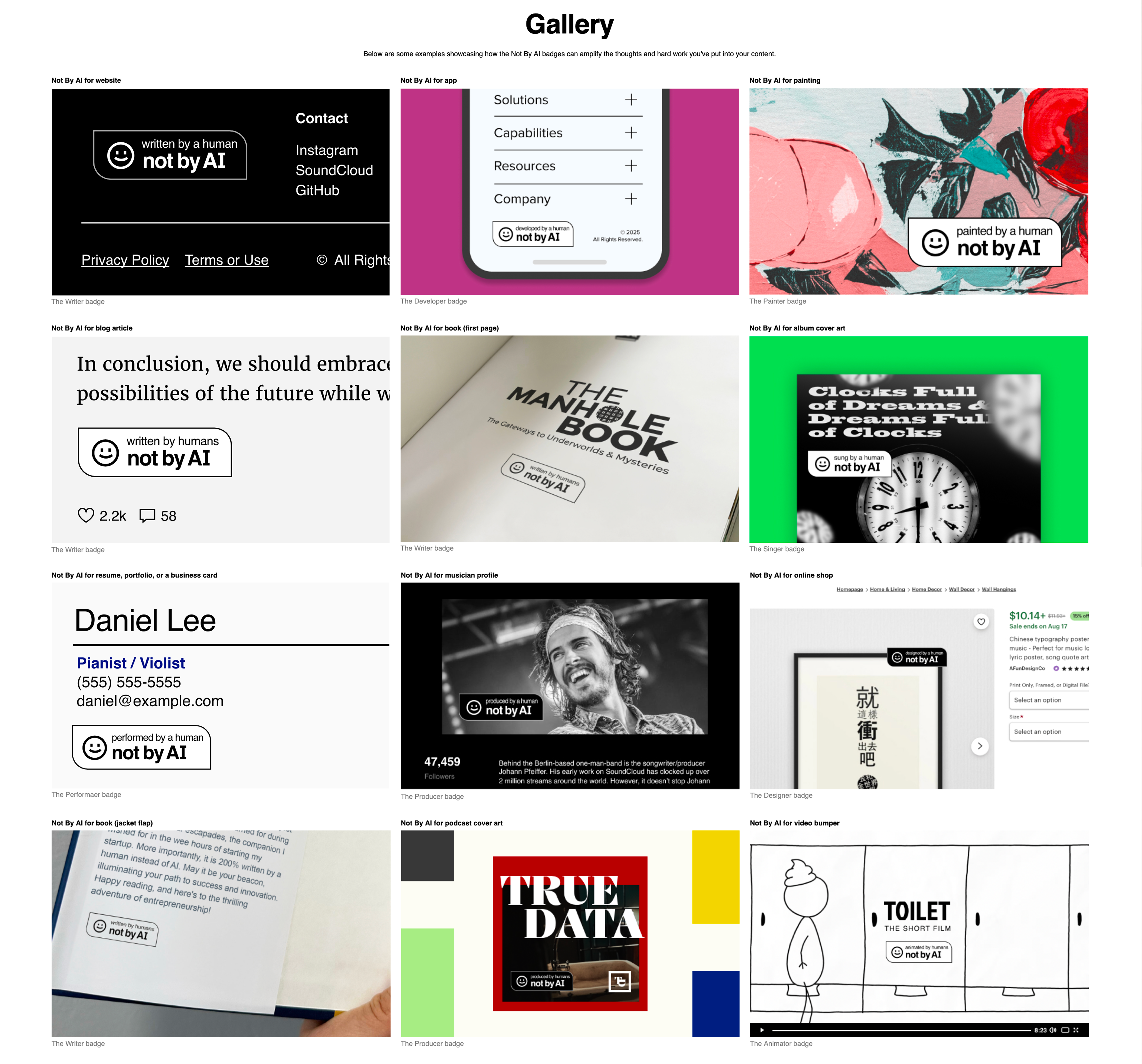

As of early 2026, the certification ecosystem is fragmented. At the least rigorous end, platforms like no-ai-icon.com and notbyai.fyi allow creators to self-apply a badge with minimal or no external verification. At the more rigorous end, services like aifreecert and Proudly Human combine professional auditors with AI-detection software and charge fees for vetting. In publishing, companies like Books by People require questionnaires and periodic sampling of submitted work.

Image source: Screenshot of Not By AI’s website, https://notbyai.fyi/gallery.

The fragmentation is a problem. Consumer researcher Dr. Amna Khan at Manchester Metropolitan University has noted that competing definitions of what is “human-made” are already confusing the market, and that a universal standard is necessary to build real trust. The Fair Trade certification, often cited as the aspirational model, took decades to consolidate from a field of competing ethical trade schemes, and it had the structural advantage of certifying physical supply chains rather than creative or digital processes.

“Made by Humans” Certification in Software and Data

For software and data products, the certification challenge is even more complex. Physical supply chains have established traceability infrastructure: provenance records, audit frameworks, chain-of-custody standards. Digital product development lacks an equivalent. There is no standard audit trail for AI involvement in a codebase, no industry-agreed taxonomy for categorizing AI contribution levels, and no third-party body with the technical depth to audit complex software systems for AI provenance.

What is starting to emerge in adjacent spaces is instructive. The ML model lifecycle has seen serious academic and technical work on provenance tracking: frameworks like Atlas propose cryptographically verifiable records of artifact authenticity and lineage from training through deployment. The Supply Chain Integrity, Transparency and Trust (SCITT) architecture, developed within the IETF, offers models for distributed audit ledgers. These are building blocks, but they are not yet connected to product-level human-made certification.
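The core idea behind tamper-evident provenance records can be illustrated without either framework. Below is a minimal sketch of a hash-chained, append-only log that uses SHA-256 from Python's standard library. This is a generic technique and not the Atlas or SCITT design. It omits signatures, trusted timestamps, and external anchoring. Without those, anyone able to rewrite the whole chain could forge it, and real systems add them for that reason.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON encoding of the payload."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + canonical).encode("utf-8")).hexdigest()


def append(log: list[dict], payload: dict) -> None:
    """Append a payload, linking it to the hash of the current last entry."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"payload": payload, "prev": prev, "hash": entry_hash(prev, payload)})


def verify(log: list[dict]) -> bool:
    """Recompute every link.

    Changing a payload breaks its own hash. Re-hashing that entry then breaks
    the next entry's "prev" link.
    """
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True


if __name__ == "__main__":
    log: list[dict] = []
    append(log, {"artifact": "auth_module.py", "assistance": "human-led, AI-assisted"})
    append(log, {"artifact": "api_reference.md", "assistance": "human-written"})
    print(verify(log))  # True
    log[0]["payload"]["assistance"] = "human-written"  # silently rewrite history
    print(verify(log))  # False
```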

The Risk of Doing It Wrong: Label Washing

The most significant risk in this space is what might be called label washing: applying a human-made certification as a marketing posture without the process discipline to back it up. Several of the current certification schemes, particularly self-certification models, offer little more assurance than the claimant’s own word.

For technology products, the reputational consequences of a credibility gap here can be significant. Suppose your product claims human-made provenance, and a technical audit or investigative reporting reveals that core components were AI-generated without disclosure. The trust damage is then compounded: you were not only using AI, you were misrepresenting your process. This is structurally similar to greenwashing in sustainability, and regulators in both the EU and the US have shown a willingness to act on misleading environmental claims. It would be reasonable to expect similar scrutiny to follow for AI-provenance claims as the market matures.

A defensible position in this environment is not necessarily a strict “no AI” claim; it is a transparent, documented, auditable one. Some of the most credible emerging frameworks define categories explicitly: human-written; human-led, AI-assisted; and AI-generated, human-reviewed. The label earns trust not by excluding AI entirely, but by being specific about the role AI played and verifiable about the process.

What This Means for Product Teams Now

The human-made label debate is still early-stage, and mandatory certification for digital products is not an immediate regulatory reality. But the trend lines are clear enough to act on now. Here is what a product team can reasonably do today:

- Map your AI touchpoints. Before you can make any claim, you need to know where AI tools are involved in your product lifecycle: design, code generation, documentation, testing, data labeling, and infrastructure. Most teams have not done this systematically.

- Establish a taxonomy for AI involvement. Rather than thinking in binary AI/no-AI terms, define categories internally, and then apply them to each stage of the product lifecycle. For example:
  - AI-assisted: human-directed work that AI executes.
  - AI-augmented: AI output that a human reviews.
  - AI-autonomous: a decision the AI makes with no material human review.

- Start building audit trails. If you are using agentic coding tools like Claude Code or Genie, consider whether your version control, PR review practices, and documentation adequately capture the human vs. AI contribution. This is process infrastructure, and it takes time to build.

- Be specific in any claims you make. “Built with AI assistance” is more defensible than “human-made” if you cannot fully substantiate the latter. Vague claims in either direction carry more risk than specific ones.

- Watch the certification landscape. The market for AI-provenance certification will consolidate, likely around standards that focus on generative AI disclosure rather than all-AI exclusion. Early engagement with credible certification bodies, or with industry working groups developing standards, positions your team ahead of the curve.
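The first two steps can be combined into a simple inventory. Below is a minimal sketch. It assumes each touchpoint is recorded as a (stage, tool, level) triple. The `Involvement` enum mirrors the three internal categories suggested above. Rating each stage by its most autonomous touchpoint is an illustrative design choice, not an established standard. The example touchpoints are hypothetical.

```python
from enum import IntEnum


class Involvement(IntEnum):
    # Internal taxonomy, ordered by how much the AI decides.
    AI_ASSISTED = 1    # human-directed, AI executes
    AI_AUGMENTED = 2   # human-reviewed AI output
    AI_AUTONOMOUS = 3  # AI decision, no material human review


def stage_profile(touchpoints: list[tuple[str, str, Involvement]]) -> dict[str, Involvement]:
    """For each lifecycle stage, return the highest involvement level among its tools.

    A stage-level claim can be no stronger than its most autonomous touchpoint.
    """
    profile: dict[str, Involvement] = {}
    for stage, _tool, level in touchpoints:
        profile[stage] = max(profile.get(stage, level), level)
    return profile


if __name__ == "__main__":
    inventory = [
        ("development", "inline code completion", Involvement.AI_ASSISTED),
        ("development", "agent-generated test suite", Involvement.AI_AUGMENTED),
        ("documentation", "prompted API reference drafts", Involvement.AI_AUGMENTED),
        ("operations", "automatic rollback on alerts", Involvement.AI_AUTONOMOUS),
    ]
    for stage, level in stage_profile(inventory).items():
        print(f"{stage}: {level.name}")
```

Using an ordered enum keeps the comparison explicit. A team that prefers a finer taxonomy can add levels without changing the aggregation logic.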

Outlook

The coming months will likely bring both market consolidation around one or two credible certification standards and growing regulatory expectations around AI disclosure in digital products.

For product teams, the most useful preparation is internal: know your AI touchpoints, classify your tools by contribution level, and build review checkpoints that generate evidence rather than just approval. The goal is not to achieve a perfect “human-made” score; for most software products, that bar is neither achievable nor meaningful. The goal is to be able to make a specific, defensible claim about where humans owned the process and where machines assisted. That specificity is what the next generation of buyers will require.

Sources

BBC News / Bode Living. “The race to establish an AI-free logo.” March 2026.

UC Strategies. “You may soon have to check this label to know if content was made by a human.” 2026.

WSJ / Prof. Tzuo-Hann Law. “Made by Humans: the strategic value of human labor.” Op-ed.

Stack Overflow / JetBrains. Developer survey data on AI coding tool adoption. 2025.

Faros.ai. “Best AI Coding Agents for 2026.” 2026.

arXiv / Atlas Framework. “Atlas: A Framework for ML Lifecycle Provenance & Transparency.” February 2025.