In the era of big data, organizations are constantly seeking new ways to store, manage, and analyze vast amounts of information. One approach that has gained significant traction is the data lakehouse. By combining the best features of data lakes and data warehouses, this architecture offers a scalable and flexible way to handle diverse data sets. In this article, we explore data lakehouses: their architecture, core concepts, advantages, and the impact they have on modern data-driven enterprises.

Understanding Data Lakehouses

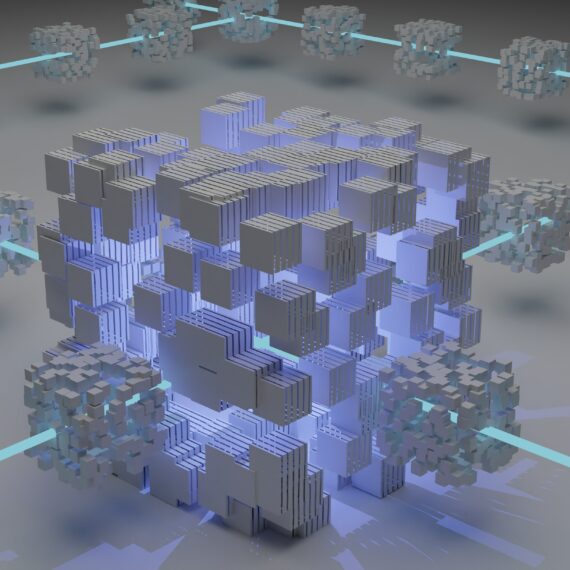

A data lakehouse is a unified data storage architecture that integrates the strengths of both data lakes and data warehouses. It aims to provide a centralized repository for structured, semi-structured, and unstructured data, kept in raw form, while enabling robust analytics capabilities.

By combining these two paradigms, data lakehouses offer a holistic approach to data management, allowing organizations to store massive amounts of data in its native format and perform various analytics tasks efficiently.

What Does the Architecture of a Data Lakehouse Look Like?

At its core, a data lakehouse architecture comprises three main components: data ingestion, data storage, and data processing. Let’s explore each of these components in detail:

- Data Ingestion

Collecting and integrating data from various sources is a crucial step in the data lakehouse architecture. This process often involves real-time data streaming, batch processing, and data integration techniques. Sources can range from transactional databases and log files to social media feeds and IoT devices, encompassing structured, semi-structured, and unstructured data. Robust data ingestion ensures that the lakehouse receives a continuous flow of data from diverse sources.

- Data Storage

Data storage is a critical aspect of the data lakehouse architecture. Unlike traditional data warehouses, which typically load data only after it has been transformed to fit a predefined schema, data lakehouses can keep data in its raw, untransformed state, so that a single copy serves as the source of truth for downstream workloads. The data is typically stored in a distributed file system, such as the Hadoop Distributed File System (HDFS), or in cloud object storage such as Amazon S3 or Azure Blob Storage. These storage systems are designed to scale out, which allows the lakehouse to handle large volumes of data.

- Data Processing

Data processing transforms raw data into a usable format for analysis and querying. This step includes operations such as data cleaning, normalization, enrichment, and aggregation. Organizations can use distributed computing frameworks like Apache Spark or Apache Hive to process data at scale and run complex analytics efficiently. Because these frameworks split work across many machines and execute it in parallel, processing capacity can grow along with data volume.
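
The four processing operations named above (cleaning, normalization, enrichment, aggregation) can be illustrated with a minimal single-process sketch. It assumes raw records land in the lakehouse as JSON lines; the sample records, the `REGION_BY_COUNTRY` lookup table, and all function names are hypothetical. A framework such as Apache Spark would run equivalent steps in parallel across a cluster, but the logic of each stage is similar.

```python
import json
from collections import defaultdict
from typing import Dict, Iterable, Iterator

# Hypothetical raw records as they might land in the lakehouse, stored untransformed.
RAW_LINES = [
    '{"order_id": 1, "country": " us ", "amount": "19.99"}',
    '{"order_id": 2, "country": "DE", "amount": "5.00"}',
    '{"order_id": 3, "country": "de", "amount": "not-a-number"}',
    '{"order_id": 4, "amount": "7.50"}',
    "this line is corrupted",
    '{"order_id": 5, "country": "FR", "amount": "12.01"}',
]

# Hypothetical enrichment table mapping country codes to sales regions.
REGION_BY_COUNTRY = {"US": "AMER", "DE": "EMEA", "FR": "EMEA"}


def clean(lines: Iterable[str]) -> Iterator[dict]:
    """Parse JSON lines, dropping malformed or incomplete records."""
    for line in lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # not valid JSON
        if not isinstance(record, dict) or "country" not in record or "amount" not in record:
            continue  # missing required fields
        try:
            float(record["amount"])
        except (TypeError, ValueError):
            continue  # amount is not numeric
        yield record


def normalize(records: Iterable[dict]) -> Iterator[dict]:
    """Trim and upper-case country codes; convert amounts to floats."""
    for record in records:
        yield {
            "order_id": record.get("order_id"),
            "country": str(record["country"]).strip().upper(),
            "amount": float(record["amount"]),
        }


def enrich(records: Iterable[dict]) -> Iterator[dict]:
    """Add a region field from the lookup table."""
    for record in records:
        yield {**record, "region": REGION_BY_COUNTRY.get(record["country"], "UNKNOWN")}


def aggregate(records: Iterable[dict]) -> Dict[str, float]:
    """Sum amounts per region, rounded to cents."""
    totals: Dict[str, float] = defaultdict(float)
    for record in records:
        totals[record["region"]] += record["amount"]
    return {region: round(total, 2) for region, total in totals.items()}


def run_pipeline(lines: Iterable[str]) -> Dict[str, float]:
    """Chain the four stages: clean -> normalize -> enrich -> aggregate."""
    return aggregate(enrich(normalize(clean(lines))))


if __name__ == "__main__":
    print(run_pipeline(RAW_LINES))
```

Running the module prints `{'AMER': 19.99, 'EMEA': 17.01}`: the corrupted line, the record without a country, and the record with a non-numeric amount are all discarded during cleaning. Chaining generators keeps each stage independent, loosely mirroring how a distributed job composes transformations before a final aggregation.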

What Are the Core Concepts of Data Lakehouses?

Schema-on-Read

One of the fundamental concepts in data lakehouses is schema-on-read. Unlike traditional data warehouses, which enforce a rigid schema when data is written (schema-on-write), data lakehouses let users apply a schema at the time data is read or analyzed. This flexibility enables organizations to adapt to evolving data requirements and explore different data models without extensive ETL (Extract, Transform, Load) processes. Schema-on-read supports agility and exploratory analysis, making it easier to discover new insights.
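
A minimal sketch of schema-on-read, assuming raw events are stored as JSON lines with no schema enforced at write time. The sample events, the `read_with_schema` helper, and the two schemas are hypothetical; the point is that the same stored data is interpreted differently by each consumer at read time.

```python
import json
from typing import Any, Callable, Dict, Iterable, Iterator, Optional

# Raw events stored exactly as they arrived. Fields vary between records
# because no schema was enforced when the data was written.
RAW_EVENTS = [
    '{"user": "alice", "ts": "1700000000", "clicks": "3"}',
    '{"user": "bob", "ts": "1700000060", "clicks": "7", "device": "mobile"}',
    '{"user": "carol", "ts": "1700000120"}',
]

# Here a schema is simply a mapping from field name to a casting function.
Schema = Dict[str, Callable[[Any], Any]]


def read_with_schema(lines: Iterable[str], schema: Schema) -> Iterator[Dict[str, Optional[Any]]]:
    """Interpret raw JSON lines using `schema` at read time.

    Only fields named in the schema are returned; each present value is cast
    with the schema's function, and missing fields become None.
    """
    for line in lines:
        record = json.loads(line)
        yield {
            field: (cast(record[field]) if record.get(field) is not None else None)
            for field, cast in schema.items()
        }


# Two consumers apply different schemas to the same stored data.
ENGAGEMENT_SCHEMA: Schema = {"user": str, "clicks": int}
DEVICE_SCHEMA: Schema = {"user": str, "device": str}

if __name__ == "__main__":
    for row in read_with_schema(RAW_EVENTS, ENGAGEMENT_SCHEMA):
        print(row)
    for row in read_with_schema(RAW_EVENTS, DEVICE_SCHEMA):
        print(row)
```

Reading with `ENGAGEMENT_SCHEMA` yields `clicks` as integers and `None` for Carol, whose record never had that field, while `DEVICE_SCHEMA` picks up the `device` field that only Bob's record contains. Neither read required rewriting the stored data.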

Unified Data Model

A data lakehouse promotes a unified data model that brings together structured, semi-structured, and unstructured data under a single storage framework. This approach allows organizations to break down data silos, making it easier to perform cross-domain analytics and gain deeper insights by combining different data types. It fosters a holistic view of data and encourages collaboration across departments. With a unified data model, organizations can leverage the full potential of their data assets.
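
To illustrate analytics across data types, the following sketch joins a structured CSV table with semi-structured JSON records that contain nested fields, counting support tickets per customer segment. The tables, field names, and the `tickets_per_segment` function are hypothetical; in a lakehouse, a query engine could express the same thing as a join over two tables backed by files in the same storage layer.

```python
import csv
import io
import json
from collections import Counter
from typing import Dict

# Structured data: a hypothetical customer table exported as CSV.
CUSTOMERS_CSV = """customer_id,name,segment
1,Acme Corp,enterprise
2,Globex,smb
3,Initech,smb
"""

# Semi-structured data: hypothetical support tickets as JSON lines with nested fields.
TICKETS_JSONL = """{"ticket_id": 10, "customer": {"id": 1}, "tags": ["billing"]}
{"ticket_id": 11, "customer": {"id": 2}, "tags": ["outage", "urgent"]}
{"ticket_id": 12, "customer": {"id": 2}, "tags": ["billing"]}
"""


def tickets_per_segment(customers_csv: str, tickets_jsonl: str) -> Dict[str, int]:
    """Join tickets to customers on customer id and count tickets per segment."""
    # Index the structured table by its key column.
    customers = {
        int(row["customer_id"]): row
        for row in csv.DictReader(io.StringIO(customers_csv))
    }
    counts: Counter = Counter()
    for line in tickets_jsonl.splitlines():
        if not line.strip():
            continue  # skip blank lines
        ticket = json.loads(line)
        # Navigate the nested JSON field to obtain the join key.
        customer = customers.get(ticket["customer"]["id"])
        if customer is not None:
            counts[customer["segment"]] += 1
    return dict(counts)


if __name__ == "__main__":
    print(tickets_per_segment(CUSTOMERS_CSV, TICKETS_JSONL))
```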

Data Governance and Security

Data governance and security are crucial aspects of data lakehouses. As data flows into the lakehouse, organizations must establish policies, standards, and controls to ensure data quality, compliance, and privacy. Implementing access controls, encryption, and monitoring mechanisms becomes vital to safeguard sensitive information and maintain regulatory compliance. With proper data governance and security measures in place, organizations can build trust and ensure the integrity of their data lakehouses.
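
Access controls and monitoring can be made concrete with a small sketch of a column-level policy that hides columns a role may not read, masks sensitive values, and records each access attempt in an audit log. The `Policy` class, the role names, and the `"***"` masking token are hypothetical, and encryption is out of scope here; lakehouse platforms typically ship their own governance tooling with different APIs.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

MASK = "***"


@dataclass
class Policy:
    """Hypothetical column-level access policy for a single table."""

    readable_columns: Dict[str, Set[str]]  # role -> columns that role may read
    sensitive_columns: Set[str]  # columns masked unless the role is trusted
    unmasked_roles: Set[str]  # roles allowed to see sensitive values in clear
    audit_log: List[str] = field(default_factory=list)

    def read(self, role: str, rows: List[Dict[str, str]]) -> List[Dict[str, str]]:
        """Return rows filtered and masked for `role`, logging the attempt."""
        allowed = self.readable_columns.get(role)
        if allowed is None:
            self.audit_log.append(f"DENY role={role}")
            raise PermissionError(f"role {role!r} may not read this table")
        self.audit_log.append(f"ALLOW role={role}")
        result = []
        for row in rows:
            visible = {}
            for column, value in row.items():
                if column not in allowed:
                    continue  # drop columns the role cannot see
                if column in self.sensitive_columns and role not in self.unmasked_roles:
                    visible[column] = MASK
                else:
                    visible[column] = value
            result.append(visible)
        return result


if __name__ == "__main__":
    rows = [{"customer_id": "1", "email": "a@example.com", "country": "US"}]
    policy = Policy(
        readable_columns={
            "analyst": {"customer_id", "email", "country"},
            "compliance": {"customer_id", "email"},
        },
        sensitive_columns={"email"},
        unmasked_roles={"compliance"},
    )
    print(policy.read("analyst", rows))
    print(policy.read("compliance", rows))
    try:
        policy.read("intern", rows)
    except PermissionError as err:
        print(err)
    print(policy.audit_log)
```

In this example the analyst sees every column but with `email` masked, the compliance role sees `email` in clear but not `country`, and an unknown role is refused; all three attempts are recorded for later review.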

What Are the Advantages of Data Lakehouses?

- Scalability and Flexibility

Data lakehouses scale to large and growing data volumes and accommodate diverse data types. Because raw data can be stored first and a schema applied on read, companies can adapt to changing business requirements and bring in new data sources without designing a schema upfront, and the underlying storage can be expanded as volumes grow. Organizations can therefore grow their data infrastructure as their needs evolve.

- Cost-Effectiveness

Compared to traditional data warehouses, data lakehouses can offer a more cost-effective solution for data storage and analytics. By leveraging cloud-based storage systems and open-source technologies, organizations can reduce infrastructure costs and pay only for the resources they consume. In addition, keeping a single copy of the data and reducing the number of ETL steps cuts both the time and the expense of data preparation. This cost-effectiveness makes data lakehouses an attractive option for organizations of all sizes.

- Faster Time-to-Insights

Data lakehouses enable faster time-to-insights by providing a platform for real-time and near-real-time analytics. With the ability to ingest and process streaming data, organizations can derive valuable insights and make data-driven decisions in a timely manner. The elimination of data silos and the ease of performing complex analytics tasks contribute to faster insights and improved business agility. By reducing the time it takes to transform raw data into actionable insights, data lakehouses empower organizations to be more responsive in today’s fast-paced business environment.
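
Near-real-time analytics often relies on processing a stream in small micro-batches and updating aggregates incrementally, so results are available shortly after data arrives. The sketch below uses a hypothetical `TumblingWindowCounter` class to count events per fixed one-minute window as batches arrive. It deliberately ignores late-arriving data, state persistence, and fault tolerance, which stream processors such as Spark Structured Streaming are designed to handle.

```python
from collections import defaultdict
from typing import DefaultDict, Dict, Iterable, Tuple

# An event is (epoch seconds, event type); this shape is a hypothetical example.
Event = Tuple[int, str]


class TumblingWindowCounter:
    """Incrementally counts events per fixed-size, non-overlapping time window."""

    def __init__(self, window_seconds: int = 60) -> None:
        self.window_seconds = window_seconds
        # window start -> event type -> count; updated in place as batches arrive
        self.counts: DefaultDict[int, DefaultDict[str, int]] = defaultdict(
            lambda: defaultdict(int)
        )

    def window_start(self, ts: int) -> int:
        """Return the start of the window that contains timestamp `ts`."""
        return ts - ts % self.window_seconds

    def process_batch(self, batch: Iterable[Event]) -> None:
        """Fold one micro-batch of events into the running counts."""
        for ts, event_type in batch:
            self.counts[self.window_start(ts)][event_type] += 1

    def snapshot(self) -> Dict[int, Dict[str, int]]:
        """Return the current results as plain dicts, ordered by window start."""
        return {start: dict(c) for start, c in sorted(self.counts.items())}


if __name__ == "__main__":
    counter = TumblingWindowCounter(window_seconds=60)
    micro_batches = [
        [(0, "click"), (10, "view")],
        [(59, "click"), (61, "click")],
        [(125, "view")],
    ]
    for batch in micro_batches:
        counter.process_batch(batch)
        # Results are queryable after every batch, not only at the end.
        print(counter.snapshot())
```

Because each batch only updates the windows it touches, the cost of refreshing results depends on the size of the new batch rather than on the full history of the stream.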

- Advanced Analytics Capabilities

Data lakehouses support advanced analytics, including machine learning, artificial intelligence, and predictive analytics. By integrating analytics frameworks like Apache Spark and building on the lakehouse’s unified data model, companies can derive deeper insights, build sophisticated models, and uncover new opportunities for innovation. Applying these techniques across a diverse range of data types helps organizations extract more value from their data and gain a competitive edge.

Closing Thoughts

Data lakehouses are changing the way organizations manage and analyze data. By combining the strengths of data lakes and data warehouses, this architecture provides a scalable, flexible, and cost-effective solution for storing and processing diverse data sets. With the ability to handle massive data volumes, support real-time analytics, promote cross-domain collaboration, and enable advanced analytics, data lakehouses are poised to become a cornerstone of modern data-driven enterprises.