With the introduction of Google Analytics 4 (GA4), Google restructured not only their Google Analytics user interface but also the data format of data acquired by Google Analytics. As of July 2023, GA4 is now mandatory for data handling with Google Analytics while Universal Analytics is being shut down and won’t be gathering data any longer unless you have a Google Analytics 360 account. In this case, the support and functionality for Universal Analytics will end in July 2024.

What’s the impact?

This has a major impact on monitoring and reporting solutions, for example dashboards used in Google Looker Studio, Microsoft Power BI or other visualization tools. Fields and KPIs you formerly used in your dashboard may now be calculated differently or may even not be available anymore in the first place.

What’s happening with data and dashboards?

Dashboards need to be adapted to the new GA4 data schema. But since GA4 is still in development and changes on a regular basis, it is not set that your dashboards can be so easily updated. Missing KPIs, limits in quotas or bugs in the API lead to some dashboards missing functionality and usability. And this is where data preparation of GA4 data via Google BigQuery comes in. This article will explain to you the necessity of using GA4 with BigQuery.

What is Google BigQuery and why is it so popular?

Google BigQuery is Google’s serverless data warehouse solution. It is part of the Google Cloud infrastructure and allows users to handle large amounts of data in a fast and cost-efficient manner. It even allows users to have a look at streaming data, making it possible to execute calculations on real-time data.

Limitations of Google Analytics 4 Direct Connection to Google Looker Studio

Visualization tools like Google Looker Studio (GLS) provide a direct connection setup to a Google Analytics 4 property. So, establishing a link between GA4 and GLS is a very easy thing to do. However, using the direct connector is not advised if you want reliable and fast-to-use dashboards.

- This is firstly due to the lag of missing fields and KPIs. For example, metrics like bounce rate or average session duration are not available in GLS while using the direct connector.

- Secondly, be aware of the quota limitations by the API. Depending on the amount of data and use of the dashboard, it may occur that you hit the quota limitation essentially breaking your dashboard by displaying error messages instead of data.

- Furthermore, and this might be the biggest issue encountered so far, is when it comes to the data itself. After some testing and comparing the GA4 data in GLS with the GA4 user interface, some major discrepancies were noticed, like the total amount of sessions being 50.000 in GLS and around 10.000 in GA4 user interface. This was caused by a manual set filter to only display data from a certain domain, using the hostname dimension. I was able to replicate this behavior in a direct API request outside of GLS. So, it appears that the API has some issues when handling at least some filters on some dimensions. A dashboard filled with data that you cannot be sure of is correct, is no use for reporting.

- And lastly, GLS is just very slow to use with a native GA4 connection. You have to keep in mind that whenever you change the parameters in your dashboard, a request is sent to GA4, and it makes real-time calculations based on your request. Depending on the amount of data it must handle and go through, this might take several minutes.

Using Google Big Query and SQL to Create Google Analytics 4 Dashboards

But how can you avoid all these issues the connector is causing? You might have guessed it by now: Google BigQuery. GA4 data that is exported to BigQuery is raw data. In the GA4 user interface, all the values for sessions, pageviews, etc. are pre-calculated assumptions. But in BigQuery, you get the raw event-based data you can make your own calculations on, allowing you to get results that are more accurate to the real amount of , for example, sessions. The user interface and API show only estimations of sessions. The downside is: you must do the calculations by yourself. Using SQL, you can access even the nested JSON fields in the raw GA4 data schema.

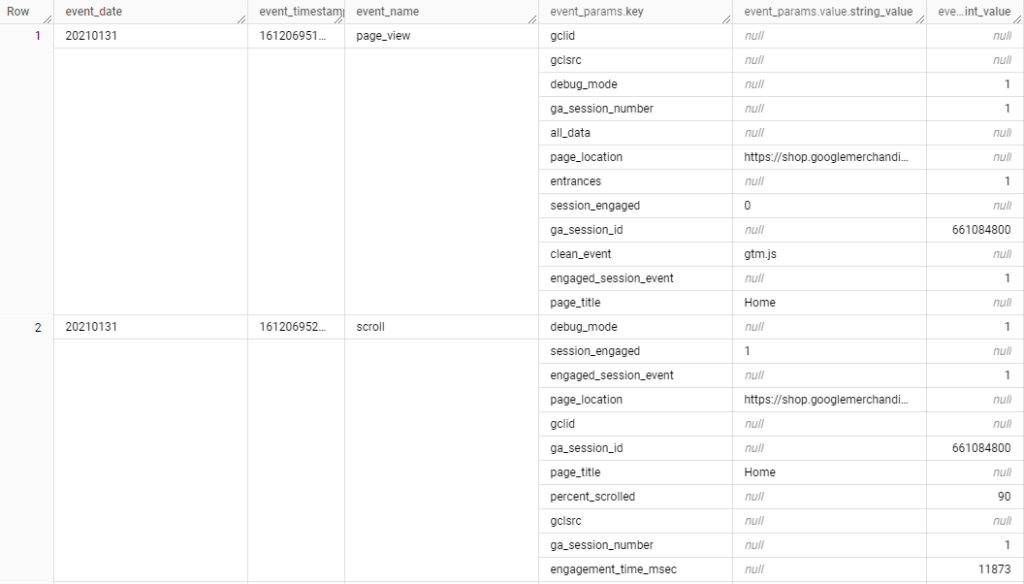

Here is an example of how raw data from Google’s public data set about the merchandise store looks like:

Using BigQuery means working in a database and in this case, it means working with SQL. But with this, you are now able to not only workaround the quota limits, but to also create custom reports with KPIs, GA4 does not offer you by itself. And the best part is that when you store your calculated and prepared data in a table in BigQuery, the results are not only limited to the actual data you need for your dashboard, but it also enables you to cache the results leading into a major speed and performance boost in GLS. By using BigQuery as your data source, you can avoid all the issues the GA4 connection is facing right now.

How to Connect GA4 With Bigquery

Connecting GA4 with Google BigQuery is a very easy thing to do. In your GA4 property, go to “Admin”. In there, you find the section “Product Links”. When you click on “BigQuery Links” you can set up a connection between your Google Cloud Project and your GA4 property. Be aware that your property can only be linked to one Google Cloud Project! Once a connection is established, your GA4 data is exported to BigQuery. When you have added a billing account to your Google Cloud Project you can even activate the export of streaming data, which basically means export of real time data.

How to Backfill GA4 Data in Bigquery

By default, the GA4 data is imported to BigQuery only from the date you first setup the connection in GA4 itself. Without a paid third-party connector, like Supermetrics, it is not possible to get historical data in BigQuery and do a complete backfill of your GA4 data.

How Much Does the GA4 Bigquery Connection Cost?

When doing data preparation and calculations in BigQuery, it is unavoidable to add a billing account. However, there is a way to use BigQuery for free, at least for testing and learning purposes.

By creating a Google Cloud project, you can setup a BigQuery sandbox. This sandbox grants 10 GB of active storage and 1 TB of processed query data each month. It also enables a $300 account balance to start with. But in the sandbox, there is no streaming data export and most importantly, all data sets have a table expiration time of 60 days, meaning you can only collect data for two months. If you want to set the expiration time to “never”, you need to add a billing account.

Google Bigquery Is a Cost-Efficient Cloud Data Warehouse

BigQuery is a very cost-efficient way to process large amounts of data. There is no exact calculation for pricing but as a rule-of-thumb, 1 TB of query size costs about $6.25 per query, or 10 GB query size are about 6.25 cents. To get a query size of 1 TB, you need to have an enormous amount of data.

Using aggregations and partitions in your querys and tables will reduce costs though, so keep this in mind.For additional information about pricing, be sure to check out the blog post from the official Google Cloud Blog.

https://cloud.google.com/bigquery/docs/editions-intro

Final Thoughts

In conclusion, using data preparation of GA4 data in BigQuery for dashboarding and reporting is mandatory. Since GA4 is still a product in development, it still has issues and features missing. With BigQuery, you can avoid most of the common issues with the GA4 API and calculate your data in a very cost-effective way.