Update 26.02.2024: European AI Office

Implementation of the EU AI Act is progressing: the European AI Office has started its work. The office aims to promote the development and use of trustworthy artificial intelligence while protecting against the risks of this technology.

Update 01.02.2024: Regulation of “general purpose AI”

New information about the regulation of “general-purpose AI” has been added to the article. General-purpose AI is now classified under a new, so far fifth, risk category: “potential high-risk”.

Update 12.12.2023: Provisional Agreement on Proposal

Following the proposal in 2021, the trilogue has now been successfully concluded after some 36 hours of negotiations spread over three days. The result clearly shows that security and data protection have played a crucial role in the negotiations.

Most important points of the provisional agreement (09.12.2023):

- Rules on high-impact general-purpose AI models that can cause systemic risk in the future, as well as on high-risk AI systems

- Revised system of governance (AI Office, scientific panel of independent experts, AI Board)

- Extension of the list of prohibitions, but with the possibility for law enforcement authorities to use remote biometric identification in public spaces, subject to safeguards

- Better protection of fundamental rights through an obligation for deployers of high-risk AI systems to conduct a fundamental rights impact assessment before putting them into use.

Note: This article was written before the EU agreed on the AI Act but is regularly updated.

Abstract

The proposed EU AI Regulation (AI Act) is a flagship project of the EU and is expected to significantly impact the deployment and use of artificial intelligence (AI) within and beyond its borders. Companies already using, developing, or considering AI should engage with the AI Act now, despite the ongoing legislative process, to avoid extensive documentation work after development. This article aims to help readers understand possible AI applications within companies, introduces the current AI Regulation draft*, and emphasizes the importance of recognizing its implications early. It provides insights into the regulatory approach, structure, and current status of the legislative process, outlines foreseeable challenges, and suggests solutions based on proven strategies from other compliance frameworks such as the GDPR (General Data Protection Regulation).

AI applications are widespread across industries, improving business operations through intelligent predictions in logistics, autonomous driving, predictive maintenance, and AI-enabled processes. Despite AI’s potential, skepticism remains, largely due to complex legal risks associated with data processing, ethical concerns, and a lack of familiarity among decision makers with AI applications and regulations.

To incentivize AI development and dissemination in the EU, the European Commission aims to establish a legal framework for “trustworthy” AI. Following three days of “marathon” negotiations, the Council presidency and the European Parliament’s negotiators reached a provisional agreement on the proposal for harmonized rules on artificial intelligence (AI), the so-called AI Act.

The term “artificial intelligence” lacks a unified definition, but the AI Act introduces a legal definition that shapes its scope.

Legislative Procedure, Objectives, Purpose of the New Law

The EU Commission initiated the regulation in response to calls from the EU Parliament and Council. The draft emphasizes the EU’s ambition to take a global leadership role in developing safe and ethically sound AI applications. It highlights the benefits of AI for society and the environment, with particular emphasis on key sectors such as environmental protection, health, finance, and mobility.

Four main goals of the envisioned AI legal framework are identified:

– Ensuring that AI systems placed on the Union market are safe and uphold the fundamental rights and values of the Union.

– Creating legal certainty to facilitate investment and innovation in AI.

– Strengthening governance and the effective enforcement of existing law on fundamental rights and safety requirements applicable to AI systems.

– Facilitating the development of an internal market for compliant, secure, and trustworthy AI applications, while preventing market fragmentation.

Key discussion points of the trilogue included the definition of AI, the list of prohibited AI systems, and the classification of and requirements for high-risk AI. Other central topics were the use of biometric data and the requirements and obligations for LGAIMs (Large Generative Artificial Intelligence Models), LLMs (Large Language Models), and GPAI (general-purpose artificial intelligence).

Although the final text is still being worked out, the regulatory framework and its central requirements are already discernible.

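The act defines the term “artificial intelligence system” broadly, covering systems with varying degrees of autonomy that can generate outputs influencing physical or virtual environments. The regulation’s personal scope extends to all actors in the AI value chain, including providers and users of AI systems. Its territorial scope is broad as well: in the Commission proposal, it covers providers placing AI systems on the EU market irrespective of where they are established, as well as providers and users in third countries where the output produced by the system is used in the Union.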

Risk-Based Approach

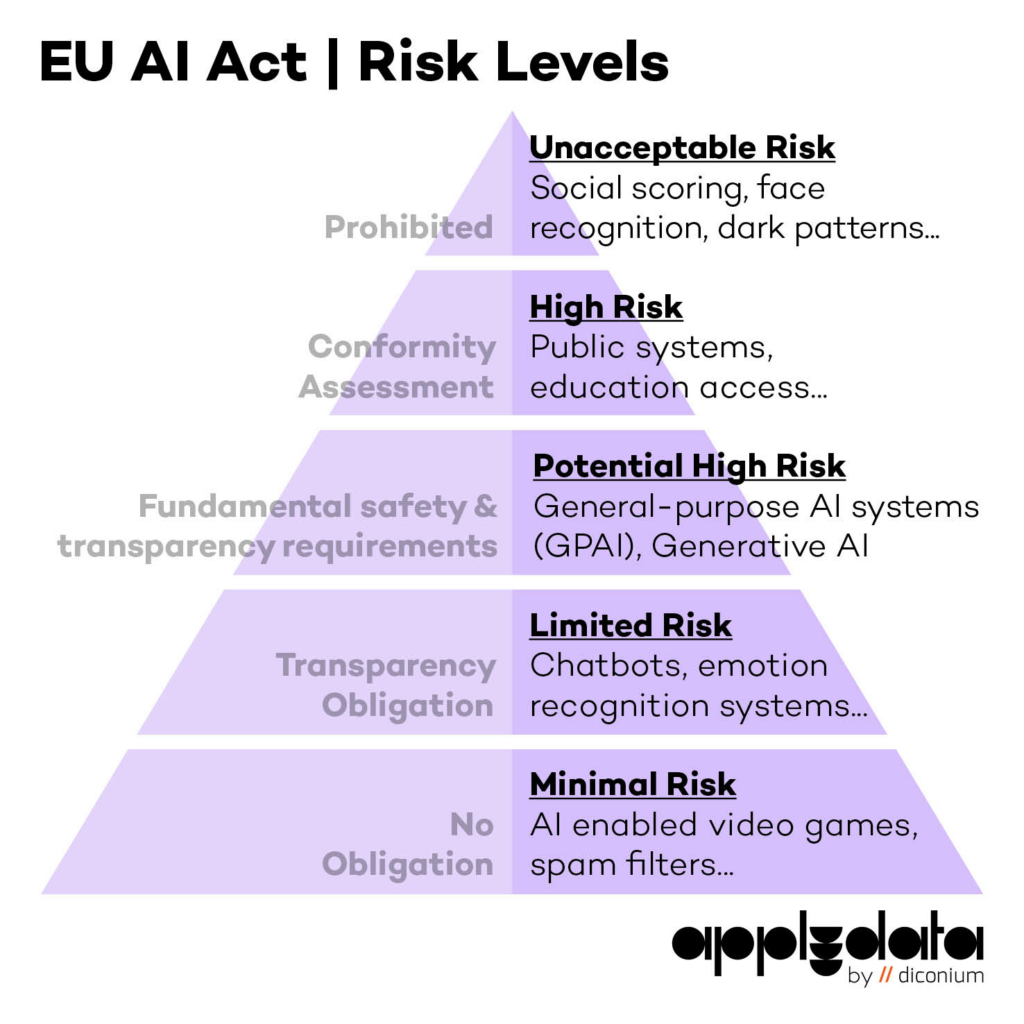

The AI Act introduces a risk-based approach, categorizing AI systems into five risk classes: minimal, limited, potential high, high and unacceptable risk. The higher the risks associated with the use of AI, the higher the regulatory requirements for the respective AI system.

Prohibited practices are outlined, addressing AI systems with unacceptable risks to fundamental rights. High-risk AI systems, posing significant risks to health, safety, or fundamental rights, are a central focus of the regulation, with specific criteria for their identification.

After extended deliberation, the AI Act now also regulates, in a two-stage approach, “general-purpose AI” trained on large amounts of data. General-purpose AI can perform a wide range of distinct tasks and can be integrated into a variety of downstream AI systems (e.g. the GPT model on which ChatGPT is based).

For general-purpose AI in general (first stage), the main obligations are transparency requirements.

For general-purpose AI with “high impact” that poses a “systemic risk” (second stage), model evaluations and tests must be carried out to assess, mitigate and monitor potential safety risks at EU level. Systemic risk is presumed if the general-purpose AI was trained using a cumulative amount of compute greater than 10^25 floating-point operations (FLOPs).
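Because the threshold refers to cumulative training compute, a rough pre-check is simple arithmetic. The sketch below is illustrative only: it assumes the widely used rule of thumb that training a dense transformer costs about 6 FLOPs per parameter per training token (C ≈ 6·N·D), which is not part of the AI Act, and the function names and model figures are hypothetical.

```python
# Presumption threshold for systemic risk named in the provisional agreement.
SYSTEMIC_RISK_THRESHOLD_FLOP = 1e25


def estimate_training_flop(n_parameters: float, n_training_tokens: float) -> float:
    """Rough estimate of cumulative training compute in FLOPs.

    Assumption: the C ~ 6 * N * D rule of thumb for dense transformers
    (about 6 FLOPs per parameter per training token). It is a heuristic,
    not a method defined in the AI Act.
    """
    return 6.0 * n_parameters * n_training_tokens


def presumed_systemic_risk(training_flop: float) -> bool:
    """True if cumulative training compute exceeds 10^25 FLOPs."""
    return training_flop > SYSTEMIC_RISK_THRESHOLD_FLOP


if __name__ == "__main__":
    # Hypothetical model: 70 billion parameters, 2 trillion training tokens.
    flop = estimate_training_flop(70e9, 2e12)
    print(f"{flop:.1e} FLOPs, systemic risk presumed: {presumed_systemic_risk(flop)}")
```

For this hypothetical model the estimate is about 8.4 × 10^23 FLOPs, more than an order of magnitude below the threshold. In practice, the actual compute should be taken from training records rather than from this heuristic.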

Categories for minimal-risk and limited-risk AI systems are also defined.

Data protection considerations are acknowledged, especially regarding the use of AI with large datasets, emphasizing the need to comply with existing data protection laws. The regulation aims to complement, rather than replace, current data protection regulations, leading to potential synergies for businesses already adhering to GDPR requirements.

The AI regulation contains a comprehensive catalog of requirements and obligations for providers, users, importers, and distributors of AI systems. The focus is on regulating high-risk AI, and various actors may be considered providers, especially if they make substantial modifications to existing AI systems. The trilogue negotiations on Article 28 of the AI Act are therefore crucial, as being classified as an AI provider entails extensive compliance requirements.

The requirements and obligations mainly target high-risk AI. Provider obligations include ensuring compliance with the AI Act’s requirements for high-risk AI systems, establishing a quality management system, keeping automatically generated logs, conducting a conformity assessment procedure, and cooperating with national authorities. Users must ensure that input data is relevant to the AI system’s intended purpose, monitor its operation, and keep logs.

A key aspect of AI regulation is mitigating risks to users and data subjects. This includes establishing risk management systems, designing AI systems transparently, using high-quality data, following data governance and management procedures, and providing technical documentation.

Overall, the risk-based approach to regulating artificial intelligence has clearly met with broad approval within the EU institutions.

What Should Companies Do?

Companies should start preparing early for the challenges posed by the AI Act; acting proactively is crucial to being ready when the regulation becomes enforceable. Non-compliance can lead to fines ranging from €7.5 million or 1.5% of global annual turnover up to €35 million or 7% of global annual turnover, depending on the infringement and the size of the company. This section provides a brief overview of actions that companies developing or using AI can take now. It then discusses how the AI Act requirements can be integrated into existing processes, some of which are already mandated by data protection law. While the AI Act aligns with data protection law in many respects, it also introduces new challenges.
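The fine ranges can be expressed as a small calculation. This is a sketch under stated assumptions: it follows the Council’s announcement of the provisional agreement, under which the fine is set as the higher of a fixed amount and a percentage of the company’s worldwide annual turnover in the previous financial year, and it adds the middle tier (€15 million or 3% for other violations of the AI Act’s obligations) announced there. The more proportionate caps announced for SMEs and start-ups are not modelled, and all names are hypothetical. The result is an upper limit, not the fine a regulator would actually impose.

```python
from enum import Enum


class Infringement(Enum):
    """Fine tiers: (fixed amount in EUR, share of worldwide annual turnover)."""
    PROHIBITED_PRACTICE = (35_000_000, 0.07)
    OTHER_OBLIGATION = (15_000_000, 0.03)
    INCORRECT_INFORMATION = (7_500_000, 0.015)


def maximum_fine(infringement: Infringement, worldwide_turnover_eur: float) -> float:
    """Upper limit of the fine: the higher of the fixed amount and the turnover share.

    Assumption: 'whichever is higher' rule as announced for the provisional
    agreement; special caps for SMEs and start-ups are not modelled.
    """
    fixed_amount, turnover_share = infringement.value
    return max(fixed_amount, turnover_share * worldwide_turnover_eur)


if __name__ == "__main__":
    # Hypothetical company with EUR 2 billion worldwide annual turnover.
    for tier in Infringement:
        print(tier.name, f"{maximum_fine(tier, 2e9):,.0f} EUR")
```

For large companies the turnover-based amount dominates; for smaller ones the fixed amount sets the ceiling.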

(1) Form an Interdisciplinary Team:

Increase awareness and sensitivity to AI and associated risks across all company departments. Establish a team comprising representatives from various departments, including legal and data management, to handle compliance.

(2) Identify AI Assets:

Determine whether the AI Act draft applies to company software and systems. Create directories or records covering all AI systems and list both current and planned uses in the company.
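An AI inventory does not need special tooling to start with. The following sketch shows one possible record structure for such a directory; the field names, status values and example systems are assumptions chosen for illustration, not a format prescribed by the AI Act.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AISystemRecord:
    """One entry in a hypothetical company-wide AI inventory."""
    name: str
    department: str
    purpose: str
    status: str                   # assumed values: "in use" or "planned"
    company_role: str             # e.g. "provider" or "user" in AI Act terms
    processes_personal_data: bool


def filter_by_status(inventory: List[AISystemRecord], status: str) -> List[AISystemRecord]:
    """Return all inventory entries with the given status."""
    return [record for record in inventory if record.status == status]


def needs_gdpr_review(inventory: List[AISystemRecord]) -> List[str]:
    """Names of AI systems that also process personal data (GDPR cross-check)."""
    return [record.name for record in inventory if record.processes_personal_data]


if __name__ == "__main__":
    inventory = [
        AISystemRecord("Demand forecast", "Logistics", "Predict order volumes",
                       "in use", "user", False),
        AISystemRecord("CV screening", "HR", "Pre-sort job applications",
                       "planned", "user", True),
    ]
    print([r.name for r in filter_by_status(inventory, "planned")])
    print(needs_gdpr_review(inventory))
```

Recording the company’s role per system matters because, as noted above, provider status brings the most extensive obligations.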

(3) Perform Risk Classification:

Develop a risk taxonomy aligned with AI Act risk classes. Classify deployed and developed AI applications into risk groups, deriving corresponding requirements and obligations from the AI Act.
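A risk taxonomy can be kept machine-readable so that each inventory entry maps to its obligations. The sketch below uses the five risk classes described in this article. The obligation lists are abbreviated summaries of the points discussed above (the transparency duty for limited-risk systems and the voluntary codes of conduct for minimal-risk systems are taken from the Commission proposal), and the rule that the strictest use case determines a system’s class is an assumption made for illustration.

```python
from enum import IntEnum
from typing import Dict, Iterable, List


class RiskClass(IntEnum):
    """The five risk classes used in this article, ordered from lowest to highest."""
    MINIMAL = 1
    LIMITED = 2
    POTENTIAL_HIGH = 3   # general-purpose AI, as classified in this article
    HIGH = 4
    UNACCEPTABLE = 5


# Abbreviated, non-exhaustive summary of obligations per class.
OBLIGATIONS: Dict[RiskClass, List[str]] = {
    RiskClass.MINIMAL: ["no specific obligations (voluntary codes of conduct)"],
    RiskClass.LIMITED: ["transparency obligations, e.g. disclosing that users interact with AI"],
    RiskClass.POTENTIAL_HIGH: ["transparency requirements",
                               "model evaluation and testing if systemic risk is presumed"],
    RiskClass.HIGH: ["risk management system", "quality management system",
                     "technical documentation", "automatic logging",
                     "conformity assessment"],
    RiskClass.UNACCEPTABLE: ["prohibited: must not be placed on the market or used"],
}


def overall_risk(use_case_classes: Iterable[RiskClass]) -> RiskClass:
    """Assumption: the strictest class among a system's use cases applies."""
    classes = list(use_case_classes)
    if not classes:
        raise ValueError("classify at least one use case first")
    return max(classes)


def obligations_for(use_case_classes: Iterable[RiskClass]) -> List[str]:
    """Look up the obligations for the overall risk class of an AI system."""
    return OBLIGATIONS[overall_risk(use_case_classes)]
```

Raising an error for unclassified systems, instead of defaulting to minimal risk, avoids silently under-classifying an AI application.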

(4) Conduct Conformity Assessment Procedures:

Prioritize adherence to conformity assessment procedures when working with or on AI applications. Use the assessment process as a starting point for effective risk management and mitigation associated with AI.

(5) Integration with Data Protection Processes:

Embed AI Act compliance into existing data protection workflows and documents, such as the Record of Processing Activities (RoPA), and leverage synergies with data protection structures to meet the diverse AI Act requirements. For instance, the risk management system required under Article 9 of the AI Act can be linked to the RoPA. A subsection “Technical Documentation under Article 11 AI Act” can be added to the RoPA, incorporating detailed descriptions of the risk management system. This documentation should identify the individuals responsible for risk management and contain a detailed risk analysis for every high-risk AI system. The technical documentation, including the risk management system, should be completed before the AI system is put into operation.
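To illustrate the RoPA extension, the sketch below models a RoPA entry with an optional subsection for the Article 11 technical documentation and a check that this subsection is filled in before a high-risk system goes live. The structure and field names are hypothetical; the check only covers the two elements named above (responsible individuals and risk analysis) and says nothing about substantive compliance.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class TechnicalDocumentationSection:
    """Hypothetical RoPA subsection 'Technical Documentation under Article 11 AI Act'."""
    responsible_persons: List[str] = field(default_factory=list)
    risk_analysis: str = ""   # identified risks and planned mitigation measures


@dataclass
class RopaEntry:
    """Simplified Record of Processing Activities entry (illustrative fields only)."""
    processing_activity: str
    legal_basis: str
    uses_high_risk_ai: bool
    ai_act_documentation: Optional[TechnicalDocumentationSection] = None


def documentation_complete(entry: RopaEntry) -> bool:
    """Check the two documentation elements named in the text before go-live.

    Entries without high-risk AI need no Article 11 subsection in this sketch.
    """
    if not entry.uses_high_risk_ai:
        return True
    doc = entry.ai_act_documentation
    return (doc is not None
            and len(doc.responsible_persons) > 0
            and doc.risk_analysis.strip() != "")
```

Such a check could serve as a simple release gate in an internal approval workflow.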

In addition to existing documentation, it is advisable to create a separate document outlining how other requirements of the AI Act are being met. This document, created during AI development and prior to deployment, provides an overview of how AI Act requirements have been implemented, making it easier to track in the future. This document should cover mandatory technical documentation, design considerations for high-risk AI, compliance with automatic logging requirements (Article 12 of the AI Act), and how transparency and user training obligations (Article 13 of the AI Act) were addressed.
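The separate implementation overview can start as a simple checklist that maps each requirement to the evidence documenting it. In this sketch, the requirement list covers only the articles mentioned in the paragraph above (Articles 11, 12 and 13 of the AI Act), and the evidence references are hypothetical document names.

```python
from typing import Dict, List, Optional

# Requirements mentioned in the text, keyed by the AI Act article cited for them.
REQUIREMENTS: Dict[str, str] = {
    "Article 11": "technical documentation",
    "Article 12": "automatic logging",
    "Article 13": "transparency and user training",
}


def open_items(evidence: Dict[str, Optional[str]]) -> List[str]:
    """List requirements for which no evidence (e.g. a document reference) is recorded."""
    return [f"{article}: {title}"
            for article, title in REQUIREMENTS.items()
            if not evidence.get(article)]


if __name__ == "__main__":
    # Hypothetical evidence references for one high-risk AI system.
    evidence = {"Article 11": "TD-2024-03 v1.2", "Article 12": None}
    for item in open_items(evidence):
        print("open:", item)
```

Further requirements can be added to the dictionary as the final text of the AI Act is published.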

Furthermore, synergies can arise with regard to the requirements of Article 10 AI Act on the use of data for training purposes. Training data can be included in existing data catalogs, with a supplementary document recording compliance with Article 10. Specific sections of this document can detail how data governance and management procedures are implemented, ensuring adherence to the AI Act.
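Such a supplementary document can be mirrored as structured fields next to each catalog entry. The sketch below is hypothetical: its topics are loosely modelled on the data governance practices listed in Article 10 of the Commission proposal (data collection, preparation such as labelling and cleaning, suitability assessment, bias examination, identification of gaps), and it only flags topics that have not been documented yet.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class TrainingDataGovernance:
    """Hypothetical supplement to a data catalog entry for one training dataset.

    The topics are loosely modelled on the data governance practices listed in
    Article 10 AI Act; they are not an exhaustive or official checklist.
    """
    dataset_id: str
    collection_process: str = ""
    preparation_steps: str = ""   # e.g. annotation, labelling, cleaning
    suitability_assessment: str = ""
    bias_examination: str = ""
    identified_gaps: str = ""


def undocumented_topics(entry: TrainingDataGovernance) -> List[str]:
    """Return the governance topics that are still empty for this dataset."""
    return [f.name for f in fields(entry)
            if f.name != "dataset_id" and not getattr(entry, f.name).strip()]
```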

Additionally, to address the dual obligations arising from both regulations, the AI Act and the GDPR, it is crucial to establish a legal basis for data processing. The use of pseudonymization, anonymization, or synthetic data is recommended to mitigate data protection risks associated with AI. Special attention should be given to informing individuals about automated decision-making, including profiling, in accordance with GDPR requirements (Articles 13 and 14).
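Pseudonymization is often implemented by replacing direct identifiers with a keyed hash. The sketch below uses HMAC-SHA256 from the Python standard library; the field names and key handling are assumptions. Under the GDPR, pseudonymized data remains personal data, because whoever holds the key can re-identify individuals by recomputing pseudonyms for known identifiers, so the key must be stored separately and protected. Anonymization requires considerably more than this.

```python
import hashlib
import hmac
from typing import Dict, List


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace a direct identifier with a keyed hash (HMAC-SHA256, hex-encoded).

    The same identifier and key always yield the same pseudonym, so records can
    still be linked across datasets without exposing the identifier itself.
    """
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def pseudonymize_records(records: List[Dict[str, str]], field: str,
                         secret_key: bytes) -> List[Dict[str, str]]:
    """Return copies of the records with the given field pseudonymized."""
    return [{**record, field: pseudonymize(record[field], secret_key)}
            for record in records]


if __name__ == "__main__":
    import secrets

    key = secrets.token_bytes(32)  # in practice: load from a separate key store
    rows = [{"email": "jane@example.com", "score": "0.82"}]
    print(pseudonymize_records(rows, "email", key))
```

A keyed hash is used instead of a plain hash because unkeyed hashes of e-mail addresses or similar identifiers can be reversed by simply hashing candidate values.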

Conclusion

The regulation is intended to provide a harmonized regulatory framework for the use of artificial intelligence and to create opportunities for modernization across all sectors.

The AI Act will not become fully applicable until two years after its entry into force, likely in 2026. Some provisions apply earlier: the prohibitions of certain AI practices after 6 months and the rules on general-purpose AI after 12 months.
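The staggered deadlines can be derived from the date of entry into force. The sketch below uses simple calendar-month arithmetic and a hypothetical entry-into-force date; the binding application dates are those fixed in the final legal text, which may count the periods differently.

```python
import calendar
from datetime import date
from typing import Dict


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping the day to the end of the target month."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def application_dates(entry_into_force: date) -> Dict[str, date]:
    """Simplified milestones as described in the text (6, 12 and 24 months)."""
    return {
        "prohibited AI practices": add_months(entry_into_force, 6),
        "general-purpose AI": add_months(entry_into_force, 12),
        "full application": add_months(entry_into_force, 24),
    }


if __name__ == "__main__":
    # Hypothetical entry-into-force date, for illustration only.
    for milestone, day in application_dates(date(2024, 6, 1)).items():
        print(f"{milestone}: {day.isoformat()}")
```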

Now that political agreement has been reached, companies should engage proactively with the provisions of the AI Act and prepare to ensure compliance by the applicable deadlines.

Building on established compliance processes and proactively addressing the challenges of the AI Act, especially in the handling of data, requires particular attention to the GDPR principles. With careful preparation and expertise, companies can effectively manage the complexity of AI regulation.